Confusion between these two terms often leads to a lot of inaccurate assumptions about the way the world works. We notice two things happening at the same time (correlation) and mistakenly conclude that one causes the other (causation). We then often act upon that erroneous conclusion, making decisions that can have immense influence across our lives. The problem is, without a good understanding of what is meant by these terms, these decisions fail to capitalize on real dynamics in the world and instead are successful only by luck.

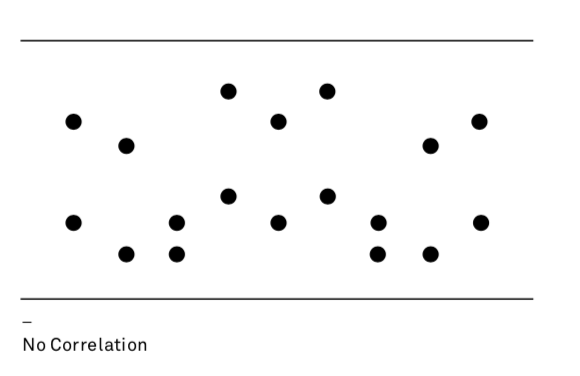

No Correlation

The correlation coefficient between two measures, which varies between -1 and 1, is a measure of the relative weight of the factors they share. For example, two phenomena with few factors shared, such as bottled water consumption versus suicide rate, should have a correlation coefficient of close to 0. That is to say, if we looked at all countries in the world and plotted suicide rates of a specific year against per capita consumption of bottled water, the plot would show no pattern at all.

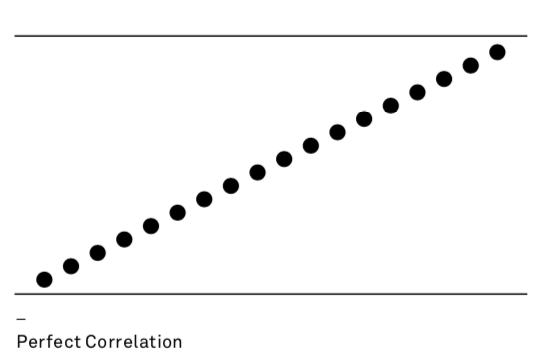

Perfect Correlation

On the contrary, there are measures which are solely dependent on the same factor. A good example of this is temperature. The only factor governing temperature—velocity of molecules—is shared by all scales. Thus, each degree in Celsius will have exactly one corresponding value in Fahrenheit. Therefore, temperature in Celsius and Fahrenheit will have a correlation coefficient of 1 and the plot will be a straight line.

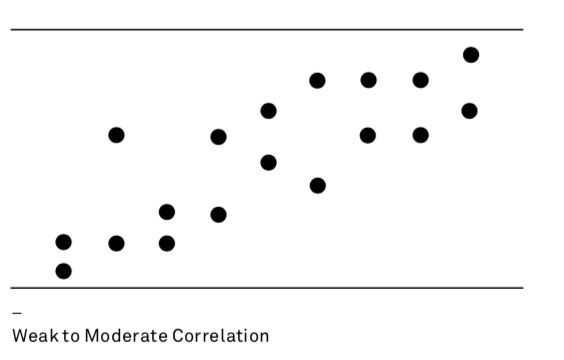

Weak to Moderate Correlation

There are few phenomena in human sciences that have a correlation coefficient of 1. There are, however, plenty where the association is weak to moderate and there is some explanatory power between the two phenomena. Consider the correlation between height and weight, which would land somewhere between 0 and 1. While virtually every three-year-old will be lighter and shorter than every grown man, not all grown men or three-year-olds of the same height will weigh the same.

This variation and the corresponding lower degree of correlation implies that, while height is generally speaking a good predictor, there clearly are factors other than height at play.

In addition, correlation can sometimes work in reverse. Let’s say you read a study that compares alcohol consumption rates in parents and their corresponding children’s academic success. The study shows a relationship between high alcohol consumption and low academic success. Is this a causation or a correlation? It might be tempting to conclude a causation, such as the more parents drink, the worse their kids do in school.

However, this study has only demonstrated a relationship, not proved that one causes the other. The factors correlate—meaning that alcohol consumption in parents has an inverse relationship with academic success in children. It is entirely possible that having parents who consume a lot of alcohol leads to worse academic outcomes for their children. It is also possible, however, that the reverse is true, or even that having kids who do poorly in school causes parents to drink more. Trying to invert the relationship can help you sort through claims to determine if you are dealing with true causation or just correlation.

Causation

Whenever correlation is imperfect, extremes will soften over time. The best will always appear to get worse and the worst will appear to get better, regardless of any additional action. This is called regression to the mean, and it means we have to be extra careful when diagnosing causation. This is something that the general media and sometimes even trained scientists fail to recognize. Consider the example Daniel Kahneman gives in Thinking Fast and Slow:

Depressed children treated with an energy drink improve significantly over a three-month period. I made up this newspaper headline, but the fact it reports is true: if you treated a group of depressed children for some time with an energy drink, they would show a clinically significant improvement. It is also the case that depressed children who spend some time standing on their head or hug a cat for twenty minutes a day will also show improvement.

Whenever coming across such headlines it is very tempting to jump to the conclusion that energy drinks, standing on the head, or hugging cats are all perfectly viable cures for depression. These cases, however, once again embody the regression to the mean:

Depressed children are an extreme group, they are more depressed than most other children—and extreme groups regress to the mean over time. The correlation between depression scores on successive occasions of testing is less than perfect, so there will be regression to the mean: depressed children will get somewhat better over time even if they hug no cats and drink no Red Bull.

We often mistakenly attribute a specific policy or treatment as the cause of an effect, when the change in the extreme groups would have happened anyway. This presents a fundamental problem: how can we know if the effects are real or simply due to variability?

Luckily there is a way to tell between a real improvement and something that would have happened anyway. That is the introduction of the so-called control group, which is expected to improve by regression alone. The aim of the research is to determine whether the treated group improves more than regression can explain.

In real life situations with the performance of specific individuals or teams, where the only real bench- mark is the past performance and no control group can be introduced, the effects of regression can be difficult if not impossible to disentangle. We can compare against industry average, peers in the cohort group or historical rates of improvement, but none of these are perfect measures.

Source: The Great Mental Models Volume 1: General Thinking Concepts

*This is an excerpt from our book, The Great Mental Models: General Thinking Concepts. Learn more about the book and how to get it here.