“What a man wishes, he will also believe” – Demosthenes

Bias from overconfidence is a natural human state. All of us believe good things about ourselves and our skills.

In Seeking Wisdom, Peter Bevelin writes:

Most of us believe we are better performers, more honest and intelligent, have a better future, have a happier marriage, are less vulnerable than the average person, etc. But we can’t all be better than average.

This inherent base rate of overconfidence is especially strong when we project our beliefs about our future. Over-optimism is a form of overconfidence. Bevelin again:

We tend to overestimate our ability to predict the future. People tend to put a higher probability on desired events than undesired events.

The bias from overconfidence is insidious because of how many factors can create and inflate it. Emotional, cognitive, and social factors all influence it. The emotional factor, as we have seen, comes from the pain of believing bad things about ourselves or our lives.

The emotional and cognitive distortion that creates overconfidence is a dangerous and unavoidable accompaniment to any form of success.

Roger Lowenstein writes in When Genius Failed, “there is nothing like success to blind one to the possibility of failure.”

In Seeking Wisdom, Bevelin adds:

What tends to inflate the price that CEOs pay for acquisitions? Studies found evidence of infection through three sources of hubris: 1) overconfidence after recent success, 2) a sense of self-importance; the belief that a high salary compared to other senior ranking executives implies skill, and 3) the CEO’s belief in their own press coverage. The media tend to glorify the CEO and over-attribute business success to the role of the CEO rather than to other factors and people. This makes CEOs more likely to become both more overconfident about their abilities and more committed to the actions that made them media celebrities.

This isn’t an effect confined to CEOs and large transactions. This feedback loop happens every day between employees and their managers, between students and professors, and even between peers and spouses.

Perhaps the most surprising, pervasive, and dangerous reinforcer of overconfidence is social incentives. Take a look at this example of social pressures on doctors from Kahneman in Thinking, Fast and Slow:

Generally, it is considered a weakness and a sign of vulnerability for clinicians to appear unsure. Confidence is valued over uncertainty and there is a prevailing censure against disclosing uncertainty to patients.

An unbiased appreciation of uncertainty is a cornerstone of rationality—but that is not what people and organizations want. Extreme uncertainty is paralyzing under dangerous circumstances, and the admission that one is merely guessing is especially unacceptable when the stakes are high. Acting on pretended knowledge is often the preferred solution.

And what about those who don’t succumb to this social pressure to let the overconfidence bias run wild?

Kahneman writes, “Experts who acknowledge the full extent of their ignorance may expect to be replaced by more confident competitors, who are better able to gain the trust of the clients.”

It’s important to structure environments that allow for uncertainty, or the system will reward the most overconfident, not the most rational, of the decision-makers.

Making perfect forecasts isn’t the goal — self-awareness is, in the form of wide confidence intervals. Kahneman, again in Thinking, Fast and Slow, writes:

For a number of years, professors at Duke University conducted a survey in which the chief financial officers of large corporations estimated the results of the S&P index over the following year. The Duke scholars collected 11,600 such forecasts and examined their accuracy. The conclusion was straightforward: financial officers of large corporations had no clue about the short-term future of the stock market; the correlation between their estimates and the true value was slightly less than zero! When they said the market would go down, it was slightly more likely than not that it would go up. These findings are not surprising. The truly bad news is that the CFOs did not appear to know that their forecasts were worthless.

You don’t have to be right. You just have to know that you’re not very likely to be right.
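One rough way to check whether your own intervals are wide enough is to write them down, wait for the outcomes, and count how often reality landed inside. The sketch below assumes a forecaster who claims to be 80% sure of each range. The function name `hit_rate` and every number in it are invented for illustration and come from no study.

```python
from typing import List, Tuple

# Each forecast is (low end of range, high end of range, actual outcome).
Forecast = Tuple[float, float, float]


def hit_rate(forecasts: List[Forecast]) -> float:
    """Fraction of outcomes that landed inside the stated interval."""
    if not forecasts:
        raise ValueError("need at least one forecast")
    hits = sum(1 for low, high, actual in forecasts if low <= actual <= high)
    return hits / len(forecasts)


# Hypothetical data, invented for illustration: ranges a forecaster
# claimed to be 80% sure of, paired with what actually happened.
made_up_forecasts = [
    (4.0, 8.0, 11.0),
    (2.0, 6.0, 5.0),
    (5.0, 9.0, -3.0),
    (1.0, 4.0, 7.5),
    (3.0, 7.0, 6.0),
]

stated_confidence = 0.80
observed = hit_rate(made_up_forecasts)
print(f"stated {stated_confidence:.0%}, observed {observed:.0%}")
if observed < stated_confidence:
    print("Intervals look too narrow: a sign of overconfidence.")
```

If the observed rate sits well below the stated one, the honest fix is usually to widen the ranges, not to try harder at guessing the midpoint.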

As always, with the lollapalooza effect of overlapping, combining, and compounding psychological effects, this one has powerful partners in some of our other mental models. Overconfidence bias is often caused or exacerbated by: doubt-avoidance, inconsistency-avoidance, incentives, denial, believing-first-and-doubting-later, and the endowment effect.

Restraining Overconfidence

One way to restrain our tendency toward overconfidence is to habitually position ourselves in such a way that renders a prediction of the future unnecessary.

You might not know with certainty when the 100-year flood is coming, but you do know with certainty it will come. And that means you should leave yourself positioned accordingly.
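The arithmetic behind this is worth seeing once. A "100-year flood" is conventionally one with a 1% chance of occurring in any given year. The sketch below assumes each year is independent of the others, which is a simplification, and the function name `prob_at_least_one` is ours. It shows how the chance of seeing at least one such flood climbs as the time horizon lengthens, even though the timing stays unknowable.

```python
def prob_at_least_one(annual_probability: float, years: int) -> float:
    """Chance of at least one event over `years` independent years."""
    # Probability of no event in any year, subtracted from 1.
    return 1.0 - (1.0 - annual_probability) ** years


# Assumption: a 100-year flood has a 1% chance in any single year.
for horizon in (1, 10, 30, 100):
    p = prob_at_least_one(0.01, horizon)
    print(f"{horizon:>3} years: {p:.1%}")
```

Under these assumptions, the chance over a full century comes out to roughly 63%, and it keeps rising the longer you stay exposed.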

The purpose of positioning is to make prediction unnecessary. You are prepared, or you are not. The argument for not preparing is one of efficiency. It’s more efficient to only prepare when you need to. Being prepared all the time carries a cost.

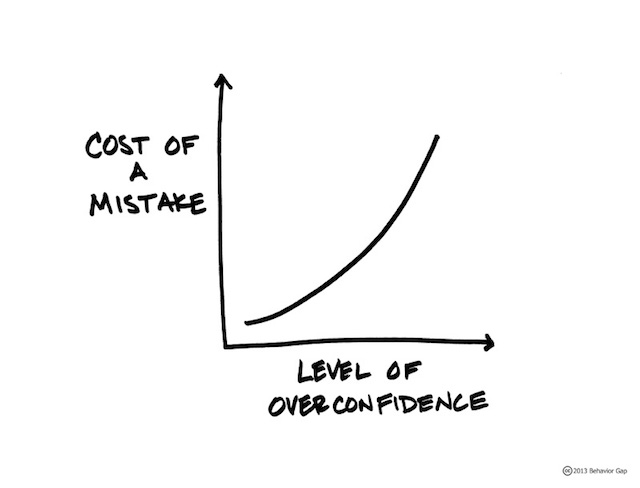

Overconfidence lulls you into a false sense of security about the future and ensures your position is weakest at the very moment you need it to be strongest. When the storm comes, it won’t give you a warning.

And in Seeking Wisdom, Bevelin reminds us that “Overconfidence can cause unreal expectations and make us more vulnerable to disappointment.” A few sentences later, he advises us to “focus on what can go wrong and the consequences.”

Build in some margin of safety in decisions. Know how you will handle things if they go wrong. Surprises occur in many unlikely ways. Ask: How can I be wrong? Who can tell me if I’m wrong?

Bias from Overconfidence is a Farnam Street mental model.